Introduction

Now that you’ve reviewed your targets and made the changes laid out in our previous post, we will review alerting basics and how best to implement them. Note that these are basic guidelines and every network is different. While we recommend starting here, additional tweaks may be needed to get the results you expect.

Types of Alerts

There are two major types of alerts, “Critical” and “Performance”, and each has two subtypes. Critical alerts are either Up/Down or Down/Up, while performance alerts are either baseline or watermark.

Critical Alerts

These are what we call reachability, or proactive detection, alerts. They notify you if a target is not responding to a real-time test (ping, DNS, HTTP, or traceroute). This now also extends to scheduled tests such as network speed, iperf, and VoIP, which alert when a test returns an “error” or fails to run.

Typically these are Up/Down alerts, where you expect the test to succeed and want to be notified if it does not. However, you can also create Down/Up alerts to make sure something is not working. These are often used to test a network from external sources (e.g., cloud agents) to confirm that something is not reachable from the outside.
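To make the Up/Down versus Down/Up distinction concrete, here is a minimal Python sketch of the logic, assuming an alert fires after a configurable number of consecutive violations. The function names and the `"up_down"`/`"down_up"` mode strings are hypothetical illustrations, not part of NetBeez.

```python
from typing import Iterable


def in_violation(reachable: bool, mode: str) -> bool:
    """Return True when a single test result counts against the alert.

    "up_down": we expect the target to be reachable, so a failure is a violation.
    "down_up": we expect the target to be unreachable, so a success is a violation.
    (Mode names are hypothetical, for illustration only.)
    """
    if mode == "up_down":
        return not reachable
    if mode == "down_up":
        return reachable
    raise ValueError(f"unknown mode: {mode!r}")


def should_alert(results: Iterable[bool], mode: str, consecutive: int) -> bool:
    """Alert once `consecutive` violations occur in a row; any good result resets the streak."""
    streak = 0
    for reachable in results:
        streak = streak + 1 if in_violation(reachable, mode) else 0
        if streak >= consecutive:
            return True
    return False
```

For example, a cloud agent that unexpectedly reaches an internal service (`should_alert([False, False, True], "down_up", 1)`) alerts immediately, while an Up/Down test that fails only intermittently never builds a long enough streak to alert.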

Performance Alerts

These alerts let you know when a test reports performance below expectations. They can be applied to real-time tests (ping, DNS, HTTP, and traceroute) as well as to network speed, iperf, and VoIP tests. For real-time tests, the two most common performance alerts are ping RTT and ping packet loss, but below are the eight performance alerts that you can set:

For scheduled tests, you can alert on any of the throughput metrics that we report, and combine multiple statistics per test type with AND/OR conditions. Below is an outline of what this looks like.

Watermark vs Baseline

Both of these have their uses in NetBeez, and the right choice depends on your network and your target configurations. A watermark lets you set a fixed threshold on a specific metric, such as ping RTT or packet loss, or a Mbps value on network speed tests. You then choose the period for the running average, such as 1, 5, or 15 minutes for ping tests, or an average of 1, 2, 3, or 20 consecutive speed tests. Where this becomes tricky is when expected performance varies greatly from test to test or agent to agent, since a single fixed threshold cannot fit them all. A baseline instead compares results against the test’s own historical average, which makes it a better fit in that situation.
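A watermark alert boils down to comparing a running average against a fixed number. The sketch below assumes the average is taken over the last `window` results (for example, an average of 3 consecutive speed tests); the function name and parameters are hypothetical, and this illustrates the idea rather than NetBeez's implementation.

```python
from statistics import mean
from typing import Sequence


def watermark_breached(samples: Sequence[float], window: int, threshold: float,
                       higher_is_worse: bool = True) -> bool:
    """Compare the average of the last `window` samples against a fixed threshold.

    Use higher_is_worse=True for metrics like RTT or packet loss, and
    higher_is_worse=False for throughput (Mbps), where low values are bad.
    """
    if window <= 0:
        raise ValueError("window must be positive")
    if len(samples) < window:
        return False  # not enough results yet to fill the window
    recent_avg = mean(samples[-window:])
    return recent_avg > threshold if higher_is_worse else recent_avg < threshold


# Speed tests: alert if the 3-run average drops below 100 Mbps
print(watermark_breached([120, 90, 80, 85], window=3, threshold=100, higher_is_worse=False))  # True
```

For a time-based window on ping, `window` would be the number of samples in that period: at the 5-second ping interval, a 15-minute window holds 180 samples.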

Alerts vs Incidents

To understand an incident, we first need to cover what an alert is. An alert is a fault or performance condition that relates to one single test, whether real-time or scheduled. An incident is triggered when a certain percentage of the tests within an agent, target, or Wi-Fi network trigger alerts. By default, the threshold is 90% for an agent incident and 80% for a target incident.

For example, if 90% of a specific agent’s ping tests are failing, an agent incident is triggered. Similarly, if the google.com test is failing on 80% of the agents, a target incident is triggered. Incidents are most often used to get notified of outages affecting either a resource (target) or a site/location (agent).
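The incident rule is a simple percentage check. This Python sketch uses the default thresholds described above; the function and constant names are hypothetical, and treating the threshold as inclusive (at least 90%, at least 80%) is our assumption.

```python
AGENT_INCIDENT_PCT = 90   # default agent incident threshold
TARGET_INCIDENT_PCT = 80  # default target incident threshold


def incident_triggered(alerting: int, total: int, threshold_pct: int) -> bool:
    """True when at least `threshold_pct` percent of the tests are alerting.

    Integer arithmetic avoids floating-point surprises right at the boundary.
    Treating the threshold as inclusive (>=) is an assumption.
    """
    if total <= 0:
        return False
    return alerting * 100 >= threshold_pct * total


# An agent running 10 ping tests, 9 of them failing
print(incident_triggered(9, 10, AGENT_INCIDENT_PCT))    # True
# The google.com target tested from 20 agents, failing on 15
print(incident_triggered(15, 20, TARGET_INCIDENT_PCT))  # False
```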

General Best Practices

While there is no one-size-fits-all approach, these are some basic guidelines we recommend:

- Ping: 12 consecutive failures (1 minute)

- DNS: 5 consecutive failures (2.5 minutes)

- HTTP: 5 consecutive failures (5 minutes)

- Traceroute: Not recommended

These are the baseline values we recommend as a starting point for virtually all targets. There are situations where traceroute may be critical to monitor, or where a mission-critical application needs an alert after 15 seconds (3 consecutive timeouts) or 30 seconds (6 consecutive timeouts). The current default is 25 seconds (5 consecutive timeouts), which is too strict for many networks, especially when monitoring Wi-Fi.
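The times in these recommendations are just the number of consecutive failures multiplied by the test interval. The sketch below makes that arithmetic explicit. The 5-second ping interval comes from this post; the DNS and HTTP intervals are inferred from the recommended counts and times, and the helper names are hypothetical.

```python
import math

# Seconds between test runs. The 5-second ping interval is stated in this post;
# the DNS (30 s) and HTTP (60 s) values are inferred from the recommended
# failure counts and times, and may differ in your own configuration.
INTERVAL_S = {"ping": 5, "dns": 30, "http": 60}


def time_to_alert(test: str, consecutive_failures: int) -> int:
    """Seconds of sustained failure before a critical alert fires."""
    return INTERVAL_S[test] * consecutive_failures


def failures_for(test: str, seconds: int) -> int:
    """Smallest number of consecutive failures covering at least `seconds`."""
    return math.ceil(seconds / INTERVAL_S[test])


# Recommended starting points
print(time_to_alert("ping", 12))  # 60
print(time_to_alert("dns", 5))    # 150
print(time_to_alert("http", 5))   # 300
# Mission-critical ping alerts at 15 s and 30 s
print(failures_for("ping", 15), failures_for("ping", 30))  # 3 6
```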

Real Time Testing (Watermark or Baseline)

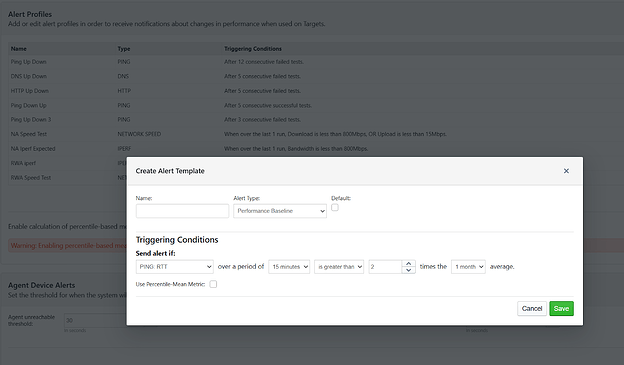

Not every test needs a performance alert. We recommend them for critical applications as well as for scheduled tests. If you have strict SLAs, a watermark alert is the go-to option, and you can create as many alerts as you like and assign specific thresholds to specific tests. Alternatively, you can set a baseline like this:

Let’s break down what this says and why I selected these options. First, the 15-minute period is preferred, though 5 minutes also works well. A 1-minute period can be skewed too easily, and 1h or 4h is too long a window. I also selected the 1-month average, because we want a few skewed measurements to be factored into the results. You can also enable the percentile-based mean calculation, which removes outliers but requires significantly more storage. Then I selected “less than” and 0.5.

In other words, if the average RTT in any running 15-minute window is more than twice the monthly average, we want to be alerted. That is a sign of performance degradation even when the test is not outright failing.
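The “less than 0.5” setting and the “more than twice the monthly average” description are the same condition read from opposite sides: a baseline below half of the current value means the current value is above twice the baseline. The sketch below assumes that reading (the exact semantics of the NetBeez form are not spelled out here) and uses a hypothetical function name.

```python
from statistics import mean
from typing import Sequence


def baseline_breached(window_samples: Sequence[float], baseline_avg: float,
                      ratio: float = 0.5) -> bool:
    """Alert when the baseline is less than `ratio` times the current window average.

    Assumed reading of the "less than 0.5" option: with ratio=0.5 this is the
    same as "current average > 2 x baseline".
    """
    current_avg = mean(window_samples)
    return baseline_avg < ratio * current_avg


# Monthly RTT average of 20 ms; RTT samples from the current 15-minute window
print(baseline_breached([40, 45, 50], baseline_avg=20.0))  # True  (45 ms > 40 ms)
print(baseline_breached([30, 35, 40], baseline_avg=20.0))  # False (35 ms <= 40 ms)
```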

Scheduled Tests (Watermark or Baseline)

Depending on your situation, a watermark or a baseline may be the right fit. If you are monitoring a wide range of devices and expect large variations in performance (e.g., Remote Worker Agents), then a baseline like the one below would be ideal.

This says: if any single test comes back at half or less of the 20-run average for download OR upload, send an alert. But if you’re an ISP and must guarantee 900 Mbps download speeds to your NDT server, you could set up something like this:
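Both speed test rules can be sketched in a few lines of Python. The baseline rule assumes the 20-run average is computed over the runs before the latest one, and the watermark rule assumes that guaranteeing 900 Mbps means any single download result below 900 Mbps should alert. The function names are hypothetical.

```python
from statistics import mean
from typing import Sequence


def speed_baseline_alert(download: Sequence[float], upload: Sequence[float],
                         runs: int = 20) -> bool:
    """Alert if the latest download OR upload result is at or below half of the
    average of the previous `runs` results for that metric (assumed definition)."""
    def degraded(history: Sequence[float]) -> bool:
        if len(history) < runs + 1:
            return False  # not enough history to form the baseline yet
        *previous, latest = history
        return latest <= 0.5 * mean(previous[-runs:])

    # OR condition: either direction degrading is enough to alert
    return degraded(download) or degraded(upload)


def speed_watermark_alert(download_mbps: float, guaranteed_mbps: float = 900.0) -> bool:
    """Alert if a single download result falls below the guaranteed speed."""
    return download_mbps < guaranteed_mbps


print(speed_baseline_alert([100] * 20 + [45], [20] * 20 + [19]))  # True (download halved)
print(speed_watermark_alert(850.0))  # True
```

The baseline version adapts to each agent's own history, which is why it suits a fleet with widely varying connections, while the watermark version enforces one contractual number everywhere.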

Mistakes to Avoid

Just as people tend to over-target, as mentioned in our previous post, overly aggressive alerting leads to alert fatigue. Alerts should be meaningful: if you receive one, you should be taking action. We will cover how to dial this in more detail in a future post on integrations.

Be careful with ping in particular, because it runs so frequently (every 5 seconds). You can change the interval on a test-by-test basis, but it needs to stay at 5 seconds for jitter and MOS to be calculated. We also do not recommend intervals longer than 15 seconds in most situations. For noncritical applications, you’re usually better off raising the ping alert to 15 or 20 consecutive timeouts before changing the interval.

Conclusion

NetBeez is an incredibly powerful tool that can alert you to any 5-second gap in reachability or a small dip in performance, but it does require proper tuning to get the maximum value out of it. Our recommendation is to always start broad and work your way toward more aggressive alerting.